Executive Summary

Enterprises already depend on hundreds of integrations across APIs, events, and data pipelines, each one evolving independently. AI agents and MCP servers are multiplying these surfaces exponentially. The only scalable path forward is trainability: integrations designed to be retrained whenever change happens. ChatINT.ai is the first platform built for this reality, and its core innovation is turning retraining into a practical, repeatable capability for enterprise systems.

Technical Detail

ChatINT.ai is a semantic alignment engine that creates, maintains, and evolves system-to-system connections through trainable, adaptive and deterministic integration models. It enables enterprises to keep integrations aligned and operational as systems change by using Living Domain Contracts, continuous model retraining based on updated artifacts, and customizable capabilities like the Trainable API. ChatINT.ai ensures semantic alignment and operational interoperability across APIs, events, queues, file systems, and other integration types.

Platform Readiness

We've built a fully functioning platform well beyond the MVP stage, already capable of live retraining against real API and schema changes.

IP Protection

We are defining a new market category under our trademarks: Trainable Integrations®, Trainable API®, and Living Domain Contracts™.

Rocket Ship Potential

This is not a linear growth play. Success here redefines how integrations happen across industries, establishing a new paradigm for enterprise-scale connectivity.

Strategic Readiness

The platform was built capital-efficiently, without outside investment. SOC 2 compliance and performance hardening for Global 2000 readiness are now in progress.

Core Concepts

Trainable Integrations® & the Trainable API®

Static integrations are like concrete — every change means breaking and re‑pouring. Trainable Integrations are built to be retrained, so you can reshape without starting over. With the Trainable API, the consumer defines exactly how they want data delivered, and retraining adapts the integration to match — flipping the burden from consumer to integration.

Trainable Integrations® are dynamic, self-adapting connections between systems that continue to evolve as their environments change. ChatINT.ai generates integration models directly from artifacts like APIs, schemas, and sample data, enabling rapid retraining as systems shift. A specialized capability, the Trainable API®, delivers responses in the form consumers require, reducing time, effort, and cost across integration projects. Retraining happens entirely within ChatINT.ai's semantic layer. It never modifies provider or consumer systems; instead, it continuously realigns them through a shared, living model of meaning.
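To make the idea concrete, here is a minimal Python sketch. It is not ChatINT.ai's actual algorithm, which this page does not describe. It assumes an integration model can be reduced to a field mapping derived from artifacts; `ALIASES`, `train`, `execute`, and every field name are hypothetical.

```python
from typing import Dict, List

# Hypothetical semantic aliases: provider field names that carry the same
# meaning as each consumer-facing field. Purely illustrative.
ALIASES: Dict[str, List[str]] = {
    "customer_id": ["customer_id", "custId", "customerNumber"],
    "email": ["email", "emailAddress", "contact_email"],
}

def train(provider_fields: List[str], consumer_fields: List[str]) -> Dict[str, str]:
    """Derive a consumer-field -> provider-field mapping from the artifacts."""
    model = {}
    for target in consumer_fields:
        # Pick the first known alias (in fixed order) that the provider exposes.
        matches = [name for name in ALIASES.get(target, [target]) if name in provider_fields]
        if not matches:
            raise ValueError(f"no provider field aligns with {target!r}")
        model[target] = matches[0]
    return model

def execute(model: Dict[str, str], payload: dict) -> dict:
    """Reshape a provider payload into the form the consumer asked for."""
    return {target: payload[source] for target, source in model.items()}

# The consumer states the shape it wants; this never changes below.
consumer_shape = ["customer_id", "email"]

# Version 1 of the provider API.
model_v1 = train(["custId", "emailAddress", "plan"], consumer_shape)
out_v1 = execute(model_v1, {"custId": 42, "emailAddress": "a@example.com", "plan": "pro"})

# The provider renames its fields. Only the model is retrained.
model_v2 = train(["customerNumber", "contact_email", "plan"], consumer_shape)
out_v2 = execute(model_v2, {"customerNumber": 42, "contact_email": "a@example.com", "plan": "pro"})

print(out_v1 == out_v2)
```

In this toy version the consumer-facing shape stays fixed while the provider changes, and neither system is modified; only the mapping between them is regenerated.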

Living Domain Contracts™

Systems change — APIs shift, schemas evolve, file formats get updated. Static specifications expire the moment they're published. Living Domain Contracts make integrations retrainable and realignable on demand, so when change happens, you adjust quickly without a rebuild.

Living Domain Contracts are continuously retrained models that keep integrations aligned as systems evolve, covering APIs, event topics, queues, and file structures. These contracts are realized and enforced through adaptive integration models, which evolve as systems and artifacts change. Unlike static specifications, Living Domain Contracts evolve through retraining by ChatINT.ai in response to domain changes. When a system's interface changes, ChatINT.ai retrains using the updated contract and adapts the integration model accordingly. Living Domain Contracts function as semantic alignment models, not physical schema definitions.
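One way to picture the trigger for retraining is a comparison between two versions of an interface artifact. The sketch below is an illustration under stated assumptions, not the platform's mechanism: it assumes a schema can be simplified to a flat map of field names to type names, and `diff_schemas` and `needs_retraining` are hypothetical helpers.

```python
from typing import Dict, List

Schema = Dict[str, str]  # field name -> type name (simplified, hypothetical)

def diff_schemas(old: Schema, new: Schema) -> Dict[str, List[str]]:
    """Report which fields were added, removed, or changed type."""
    added = sorted(set(new) - set(old))
    removed = sorted(set(old) - set(new))
    retyped = sorted(f for f in set(old) & set(new) if old[f] != new[f])
    return {"added": added, "removed": removed, "retyped": retyped}

def needs_retraining(changes: Dict[str, List[str]]) -> bool:
    """Any structural change to the artifact is treated as a retraining trigger."""
    return any(changes.values())

schema_v1 = {"id": "integer", "email": "string", "created": "string"}
schema_v2 = {"id": "string", "email": "string", "created_at": "string"}

changes = diff_schemas(schema_v1, schema_v2)
print(changes)
print(needs_retraining(changes))
```

A real contract would cover meaning as well as structure; this sketch only shows the structural half of the comparison.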

Deterministic Training & Execution

ChatINT.ai is built on a deterministic algorithmic core. Two guarantees follow. Deterministic training: the same artifacts always produce the same model. Deterministic execution: the same payload, run against the same model and Living Domain Contract, always produces the same result. Every retraining is reproducible. Every transformation is repeatable.

ChatINT.ai's training and runtime are algorithmic, not generative — given the same inputs, the trainer and engine compute, they don't sample. Deterministic Training: a fixed set of integration artifacts — API specs, schemas, examples, contracts — produces the same model on every run, on any machine, at any time. Deterministic Execution: a fixed input payload, model, and Living Domain Contract produces the same output payload on every run. This is what makes retraining safe to deploy in production: the behavior of a retrained model is fully defined by its inputs, fully testable, and fully reproducible — auditable in regulated environments where probabilistic, LLM-based approaches cannot meet the bar.
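The two guarantees can be expressed as a reproducibility check. The following is a minimal sketch with toy stand-ins: `train_model` and `run_model` are hypothetical pure functions, not ChatINT.ai's trainer or engine, and the fingerprinting scheme (SHA-256 over canonical JSON) is an assumption chosen for illustration.

```python
import hashlib
import json

def fingerprint(obj) -> str:
    """Stable SHA-256 over a canonical JSON encoding (sorted keys, fixed separators)."""
    canonical = json.dumps(obj, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def train_model(artifacts: dict) -> dict:
    """Toy trainer: a pure function of its input, with no randomness and no clock."""
    fields = sorted(artifacts["schema"])  # fixed order, independent of input order
    return {"mapping": {name: name.lower() for name in fields}}

def run_model(model: dict, payload: dict) -> dict:
    """Toy engine: renames payload fields according to the model."""
    return {target: payload[source] for source, target in model["mapping"].items()}

artifacts_a = {"schema": {"Id": "integer", "Email": "string"}}
artifacts_b = {"schema": {"Email": "string", "Id": "integer"}}  # same content, other order

model_a = train_model(artifacts_a)
model_b = train_model(artifacts_b)
payload = {"Id": 7, "Email": "a@example.com"}

# Deterministic training: identical artifacts give an identical model fingerprint.
same_model = fingerprint(model_a) == fingerprint(model_b)
# Deterministic execution: identical payload and model give an identical result.
same_output = run_model(model_a, payload) == run_model(model_b, payload)
print(same_model, same_output)
```

A check of this shape can run in a test suite or an audit: store the fingerprint alongside each trained model, retrain from the archived artifacts, and compare.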

How is this problem solved today?

Current approaches — custom code, middleware, gateways, schema translation tools — are all static snapshots. They work until something changes. Then you pay the tax again. The future belongs to integrations you can retrain on demand.

How ChatINT.ai Solves the Domain Mismatch Problem

ChatINT.ai aligns systems at the model level and keeps them aligned through retraining. When things change, you retrain — not rebuild. That turns integration from a brittle project into a flexible Enterprise Capability.

Total Addressable Market (TAM)

Interoperability is a distributed economic layer approaching $1 trillion annually. It is not a niche software category, but a structural IT condition driven by compounding system density and independent domain evolution.

1. The 2024 Foundation ($420B–$495B)

The Integration Economy spans vendor software, global services, and the massive "hidden" market of internal enterprise engineering. Industry benchmarks place recurring IT maintenance spend at 55–80% of total budgets—the primary driver of the Integration Tax.

| Market Pillar | 2024 Est. | Description |
|---|---|---|
| Software Vendor Layer | ~$50B | iPaaS, API Management, Data Integration, and Event Streaming tools. |
| SI Services | ~$272B | Integration-attributable share of the $553B System Integration market. |
| Internal Engineering | ~$135B | Bespoke build and manual alignment capacity within enterprise IT teams. |
| Total Foundation | $420B–$495B | Conservative deduplicated base for 2024. |
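As a quick arithmetic check on the table, the sketch below uses only the point estimates it lists (variable names are illustrative). The three pillars sum to about $457B, which falls inside the stated $420B–$495B range, and the SI Services figure works out to roughly 49% of the $553B System Integration market.

```python
# Point estimates from the table above, in billions of USD.
pillars = {
    "Software Vendor Layer": 50,
    "SI Services": 272,
    "Internal Engineering": 135,
}

total = sum(pillars.values())
si_share_pct = round(pillars["SI Services"] / 553 * 100, 1)

print(total)          # 457
print(si_share_pct)   # 49.2
print(420 <= total <= 495)
```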

2. The Acceleration to $1T (2030)

By 2030, the Integration Economy is projected to reach $0.77T–$1.05T. This growth is driven by two distinct dynamics:

- Structural Baseline (~$710B): Organic growth of existing enterprise systems and connectivity density.
- AI/MCP Multiplier (~$300B): Strong acceleration (+4–5 pts CAGR) driven by task-specific AI agents and MCP endpoint proliferation.

Market Projection (2030)

Modeling endpoint proliferation from Agent embedding and MCP adoption.

- Structural Baseline: ~$710B
- Moderate Acceleration: ~$840B
- Strong Acceleration: ~$1,050B

3. The Trainable Integrations™ Economy (TIE)

The TIE represents the specific portion of the Integration Economy structurally exposed to semantic translation and addressable by ChatINT.ai. It includes the entire Integration Tax (maintenance) and the semantic-driven share of new builds.

Addressable TIE (2030)

The market where Retraining Replaces Rebuilding.

The Shift is Inevitable

Every enterprise already depends on thousands of integrations, each one a translation between systems that never stop changing. As the number of systems increases, the potential interactions grow combinatorially, not linearly. Static integrations freeze meaning at the moment they're built, so every update to an API, schema, or data model fractures alignment and triggers costly rebuilds. Global integration spend is already estimated at $420B–$495B and is accelerating toward ~$1T by 2030.
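The combinatorial claim can be illustrated with the simplest case. The text gives no formula, so this sketch assumes one common reading: counting every unordered pair of systems as a potential interaction, which is n(n-1)/2 for n systems. `potential_pairs` is a hypothetical helper.

```python
from math import comb

def potential_pairs(n_systems: int) -> int:
    """Number of distinct system pairs, i.e. n * (n - 1) / 2."""
    return comb(n_systems, 2)

for n in (10, 100, 1000):
    print(n, potential_pairs(n))
# 10 45
# 100 4950
# 1000 499500
```

Under this assumption, a tenfold increase in systems produces roughly a hundredfold increase in potential pairings. Not every pair is ever integrated in practice, so this is an upper bound on pairwise connections rather than a forecast.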

At the same time, AI functions as an API-creation engine and the Model Context Protocol (MCP) acts as a connection multiplier, embedding schemas in every exchange and expanding the integration surface across enterprises. The result is exponential growth in endpoints, contracts, and change velocity — and with it, the Integration Tax that drains enterprise time and capital. Static methods cannot absorb this rate of change.

Trainable Integrations® resolve this structural failure by turning rebuilding into retraining. They convert rework into a controlled, repeatable adaptation loop.

Vision

Enterprises will treat integration as an always-on capability. Integration stops being a bottleneck and becomes the fabric of scale: every new system, every new API, every new data stream connects once and keeps pace with change forever.

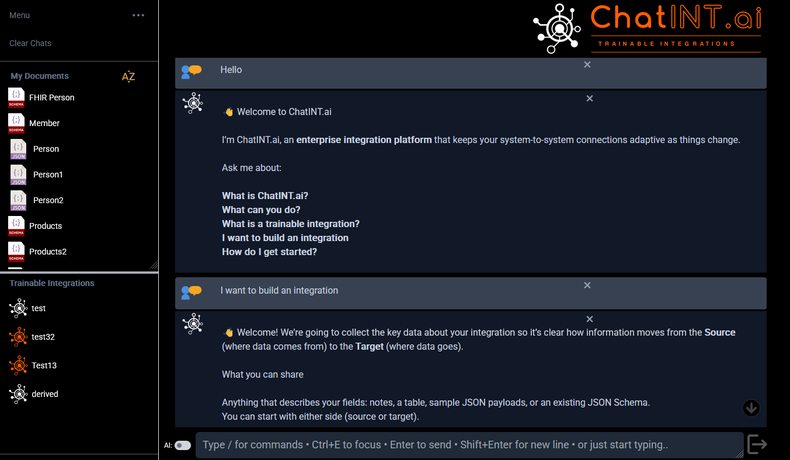

The "Holy Sh*t" Moment

It happens the first time someone sees a live retraining. An API or schema changes, and in minutes the integration adapts. What once required weeks of breakage collapses into a single controlled step.

Investor Strategic Plan

$25M Investment Strategy

A $25M investment validates inevitability by embedding ChatINT.ai inside the organizations where Trainable Integrations are most urgent and most visible. These include Global 2000 enterprises, government agencies, and strategic sovereign funds.

Use of Proceeds

- FDE Program: Deploy engineers directly into partner environments to solve live integration problems.
- Strategic Beta: Fund targeted Beta Programs with global enterprises and public-sector organizations.
- Scale Readiness: Achieve SOC2, performance hardening, and engineering capacity for massive workloads.

Is $25M enough to achieve your goals?

$25M is the capital required to validate inevitability: to demonstrate it in practice inside these accounts and to establish the reference points that unlock larger rounds of capital. Success in these accounts defines the category.

Deep Dives & Insights

Explore our latest research on the shift toward retrainable enterprise infrastructure, the hidden costs of integration, and the future of interoperability.

The Trainable Integrations™ Economy

Quantifying the $420B–$495B Integration Tax and the structural shift toward retraining as interoperability density approaches $1T.

The Inevitability of Trainable Integrations

Analyzing the structural failure of static translations and why the market is forced toward retrainable models.

MCP & The Integration Tax

How the Model Context Protocol is multiplying connectivity while exposing the high cost of legacy translation layers.

The End of Static Integrations

Why the dawn of a new market category is inevitable as enterprises reach the limits of static integration technology.

The Flaws of Dumb Pipes

A strategic analysis of IBM's $11B purchase of Confluent and the architectural limits of transport-only infrastructure.

Founder - Duane Lall

Duane Lall has solved the core technical and architectural challenges of static integration by designing, building, and delivering the ChatINT.ai platform. The platform is functional, demo-ready, and proves that a trainable, adaptive model for enterprise integration is not just a concept, but an operational reality.

Key Accomplishments

- ✓ Delivered a Functional Platform: Built the core platform, which is currently demo-ready. It can perform a live retraining, adapting to real API and schema changes in minutes.

- ✓ Shipped Core Technical Constructs: Defined and implemented the foundational intellectual property: Trainable Integrations, The Trainable API, and Living Domain Contracts.

- ✓ Established Market Viability: Developed the comprehensive TAM model showing the 2024 global integration spend at $420B–$495B, projected to ~$1T by 2030.

- ✓ Proof & Credibility: Validated through a suite of more than 4,700 automated tests, with hundreds dedicated specifically to integration models across a wide selection of business domains.

- ✓ First Live Truth Layer in the Industry: Shipped a public, agent-verifiable truth layer where AI agents read ChatINT.ai's claims and verification protocol from the MCP server, run the protocol against the live engine, and report conclusions grounded in cited observations. ChatINT.ai is the first integration engine to publicly expose this evidence layer. The category shifts from claim-based marketing to evidence-on-demand.

Direct Contact

ChatINT.ai is building the future of enterprise system-to-system connections. Connect with us directly to discuss investment, partnerships, or our beta program.